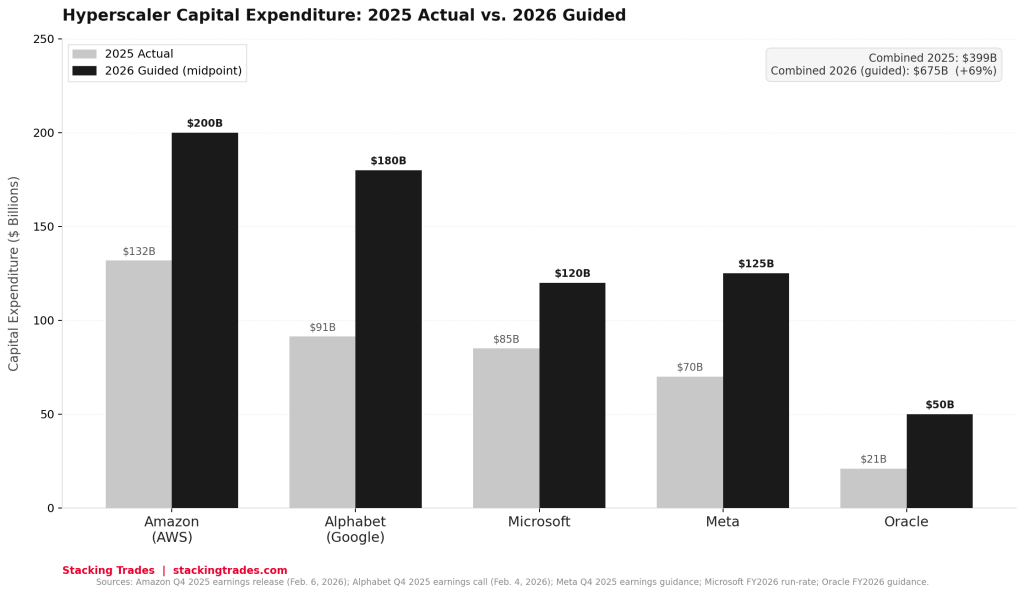

The number that stopped investors cold was not a loss or a miss. It was a capex forecast. When Amazon reported fourth-quarter earnings on February 6, CEO Andy Jassy committed to spending $200 billion in capital expenditures across Amazon in 2026 — more than the company generated in operating cash flow in 2025. Within days, Alphabet had disclosed plans for $175 billion to $185 billion in its own 2026 capex spend. Meta had already told investors it would invest between $115 billion and $135 billion. Microsoft is tracking toward $120 billion or more. Oracle has guided to $50 billion, a 136% increase over 2025.

Add those five figures together and you arrive at a number the technology industry has never seen before: roughly $660 billion to $690 billion in committed capital expenditure from a single cohort of companies, in a single calendar year, almost entirely directed at artificial intelligence infrastructure. Data center capital expenditures industrywide grew 57% in 2025 to $726 billion, the fastest growth Dell’Oro Group has recorded since it began tracking the statistic in 2014. The research firm now estimates the sector will cross the $1 trillion threshold in 2026 — a milestone it had previously projected would not arrive until 2029.
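The arithmetic behind those figures is easy to check. The sketch below is illustrative only: it treats Microsoft's "$120 billion or more" and Oracle's $50 billion as point estimates (an assumption, since Microsoft's figure is really a floor), and the function names are hypothetical. It also derives two numbers the Dell’Oro data implies but the text does not state: the 2024 base and the growth needed to reach $1 trillion in 2026.

```python
# 2026 capex guidance in billions of USD, as cited in the article.
# Assumption: Microsoft ("$120 billion or more") and Oracle are
# modeled as single points rather than ranges.
GUIDANCE_2026 = {
    "Amazon": (200, 200),
    "Alphabet": (175, 185),
    "Meta": (115, 135),
    "Microsoft": (120, 120),
    "Oracle": (50, 50),
}


def aggregate_range(guidance):
    """Sum the low ends and the high ends of each company's guidance."""
    low = sum(lo for lo, _ in guidance.values())
    high = sum(hi for _, hi in guidance.values())
    return low, high


def implied_prior_year(current_bn, growth_pct):
    """Back out the prior-year total from a current total and its growth rate."""
    return current_bn / (1 + growth_pct / 100)


def required_growth_pct(current_bn, target_bn):
    """Growth rate needed to move from the current total to a target."""
    return (target_bn / current_bn - 1) * 100


low, high = aggregate_range(GUIDANCE_2026)
print(f"Five-company 2026 capex: ${low}B to ${high}B")

# Dell'Oro figures from the article: $726B in 2025 after 57% growth.
base_2024 = implied_prior_year(726, 57)
needed = required_growth_pct(726, 1000)
print(f"Implied 2024 industrywide capex: ${base_2024:.0f}B")
print(f"Growth needed to reach $1T in 2026: {needed:.1f}%")
```

Under these assumptions the five-company total comes to $660 billion to $690 billion, the implied 2024 base is about $462 billion, and crossing $1 trillion would take growth of just under 38%, well below the 57% recorded in 2025.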

For investors focused on public markets, the numbers generate an obvious question about free cash flow and return timelines. For investors who think in terms of private markets and emerging sectors, the more important question is about the second-order effects: who builds the data centers, who supplies the power, who makes the cooling systems, who lays the fiber, and whether any of those positions are available at reasonable valuations before the buildout completes.

What the CEOs Actually Said

The primary source record on this spending cycle is unusually explicit. Jassy did not hedge his guidance in the Q4 earnings release, issued February 6: “With such strong demand for our existing offerings and seminal opportunities like AI, chips, robotics, and low earth orbit satellites, we expect to invest about $200 billion in capital expenditures across Amazon in 2026, and anticipate strong long-term return on invested capital.” On the earnings call that followed, Jassy added that the spending is “predominantly in AWS” and “most of it is in AI.” AWS CEO Matt Garman, in a separate interview, was more pointed: even with the $200 billion commitment, he said, the company expected to remain capacity constrained for the next several years.

Alphabet’s guidance was similarly unambiguous. CEO Sundar Pichai described a company operating under supply constraints even as it ramps. “We’ve been supply constrained even as we’ve been ramping up our capacity,” Pichai said on the Q4 call. “Obviously, our CapEx spend this year is an eye toward the future.” Alphabet’s finance chief Anat Ashkenazi told analysts the $175 billion to $185 billion range would go toward AI compute capacity for Google DeepMind, cloud customer demand, and strategic investments. Google Cloud reported a contracted backlog of $240 billion at the end of 2025, up 55% quarter-over-quarter. Amazon’s equivalent figure was $244 billion, up 40% year-over-year. The backlog figures matter because they represent signed customer contracts, not optimistic projections — the infrastructure being built already has buyers.

“With such strong demand for our existing offerings and seminal opportunities like AI, chips, robotics, and low earth orbit satellites, we expect to invest about $200 billion in capital expenditures across Amazon in 2026, and anticipate strong long-term return on invested capital.”

— Andy Jassy, President and CEO, Amazon, Q4 2025 Earnings Release, February 6, 2026

Why Consensus Keeps Getting This Wrong

One of the more instructive patterns in the AI infrastructure cycle is how consistently Wall Street has underestimated hyperscaler capex. Goldman Sachs Research noted that consensus capex estimates for the hyperscaler group proved too low in both 2024 and 2025 — in each year, analysts entered the period projecting roughly 20% growth and the actual figure exceeded 50%. Before Amazon’s February guidance, the broad Street expectation for its 2026 capex had been in the mid-$140 billions. The $200 billion disclosure was not a modest upward revision. It was a rewrite of the investment thesis.
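To put a rough size on that revision, the sketch below compares the guidance with prior consensus. The text gives consensus only as "the mid-$140 billions", so the $145 billion value is an assumption for illustration, and the function name is hypothetical.

```python
def revision_vs_consensus(consensus_bn, guidance_bn):
    """Return the gap in billions and as a percentage of consensus."""
    gap_bn = guidance_bn - consensus_bn
    return gap_bn, gap_bn / consensus_bn * 100


# Assumption: "mid-$140 billions" is represented as $145B.
# The article does not give an exact consensus figure.
ASSUMED_CONSENSUS_BN = 145
GUIDANCE_BN = 200  # Amazon's stated 2026 capex guidance

gap_bn, gap_pct = revision_vs_consensus(ASSUMED_CONSENSUS_BN, GUIDANCE_BN)
print(f"Guidance exceeded assumed consensus by ${gap_bn}B ({gap_pct:.0f}%)")
```

On that assumed baseline the guidance lands about $55 billion, or roughly 38%, above what the Street had modeled. Shifting the assumed consensus a few billion in either direction changes the percentage only modestly.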

The structural reason for the consistent underestimation is that the demand signal arrives in the form of contracted backlog rather than recognized revenue. It is visible in earnings calls but not in income statements, and analysts who model from reported financials lag the companies’ own forward visibility. Amazon and Google both entered 2026 knowing the infrastructure they were commissioning already had committed buyers at the other end. The CEOs were not guessing at demand. They were telling investors what the order book already showed.

The Capex That Never Stops at the Hyperscaler

Every dollar of AI data center investment moves through a supply chain before it shows up in a server rack. The approximate breakdown of hyperscaler AI capex — roughly 35% to GPU and server hardware, with the remaining 65% distributed across land, construction, power infrastructure, cooling systems, networking, and facility equipment — means the $450 billion or so directed specifically at AI infrastructure in 2026 will generate concentrated demand across multiple adjacent sectors. Nvidia captures an estimated 90% of the AI accelerator portion of that hardware spend. The rest flows into categories that are harder to invest in directly but no less consequential.
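In dollar terms, the split works out as sketched below, using the approximate 35/65 breakdown and the roughly $450 billion figure from the paragraph above. The function name is hypothetical. Nvidia's estimated 90% share is left out of the calculation because it applies to the AI accelerator portion only, and the text does not say how much of the hardware bucket is accelerators.

```python
AI_CAPEX_2026_BN = 450     # "$450 billion or so" directed at AI infrastructure
HARDWARE_SHARE_PCT = 35    # approximate share going to GPU and server hardware


def split_capex(total_bn, hardware_pct):
    """Split total capex into hardware and everything else (land,
    construction, power, cooling, networking, facility equipment)."""
    hardware_bn = total_bn * hardware_pct / 100
    other_bn = total_bn - hardware_bn
    return hardware_bn, other_bn


hardware_bn, other_bn = split_capex(AI_CAPEX_2026_BN, HARDWARE_SHARE_PCT)
print(f"GPU and server hardware: ${hardware_bn:.1f}B")
print(f"Everything else:         ${other_bn:.1f}B")
```

On these approximate inputs, about $157.5 billion goes to hardware and about $292.5 billion flows to the physical layers discussed in the rest of this section.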

Power is the most frequently cited constraint. Global data center electricity consumption is projected to roughly double between 2022 and 2026, according to the International Energy Agency, with AI driving the acceleration. The energy requirement for AI training runs and inference at hyperscaler scale has made long-term power purchase agreements and direct utility partnerships a competitive necessity, not an operational preference. Companies with contracted renewable generation capacity, transmission infrastructure access, or geographic positioning near underutilized grid capacity have begun attracting a category of attention from the hyperscalers that would have seemed implausible two years ago.

Cooling is the second physical constraint. High-density GPU clusters generate heat at rates that conventional air-cooling architectures struggle to manage economically. Liquid cooling, immersion cooling, and hybrid thermal management systems have moved from niche deployments to line items in hyperscaler procurement plans. The firms supplying those systems, and the industrial engineering companies capable of integrating them at data center scale, are beneficiaries of the buildout in a way that is structurally different from GPU exposure — less visible, lower multiple risk, and with customer relationships that tend to be stickier than commodity hardware procurement.

Construction and real estate form the third layer. A data center at the scale Alphabet and Amazon are commissioning requires not just land and buildings but power substations, fiber entry points, water rights for cooling, and in some jurisdictions, direct engagement with municipal governments on grid capacity expansion. The firms capable of executing that development pipeline at speed, and at the quality specifications hyperscalers require, are operating in a seller’s market for their services. This context is worth keeping in mind when evaluating the Terafab consortium’s ambitions: as our prior analysis noted, building semiconductor fabs at scale shares many of the same physical bottlenecks as data center construction, including compressed timelines, constrained specialized labor, and supply chains that are already stretched.

The Return Question Nobody Can Answer Yet

The aggregate commitment is not being made blindly, but neither is it risk-free. Microsoft’s Amy Hood made an argument on the January 28 earnings call that has become something close to the official position of the hyperscaler cohort: the capital spending creates competitive positioning that no single revenue metric captures. That framing is defensible and probably correct. It is also the kind of argument that does real work when returns take time to materialize.

The most direct test of the thesis is whether cloud revenue growth can sustain or accelerate as AI infrastructure comes online. AWS grew 24% year-over-year in Q4 2025, its fastest rate in 13 quarters. Google Cloud grew 28% for the full year 2025 and reported a $70 billion annualized run rate. Microsoft Azure grew 39% year-over-year with AI contributing an estimated 13 to 16 percentage points. The growth rates justify the investment only if they hold or improve while the new capacity is being absorbed — and the contracted backlog figures from both Amazon and Alphabet suggest that the demand is booked, even if it has not yet been fully recognized in revenue.

The more nuanced concern, flagged in earnings commentary and analyst notes, is whether the agentic AI revenue cycle being tracked by enterprise software companies — the subject of a recent analysis here — translates into durable compute demand or represents a wave of consumption that plateaus as enterprises optimize their token usage. Salesforce disclosed that its Agentforce platform processed nearly 20 trillion tokens cumulatively. Microsoft confirmed 15 million paid Copilot seats. Those numbers create GPU demand now. Whether they create infrastructure-level demand at the scale the hyperscalers are commissioning depends on whether agentic AI adoption broadens beyond the early enterprise cohort — a question no quarterly report has fully answered.

Where the Investment Signal Actually Points

For investors tracking the infrastructure buildout rather than the application layer, the practical challenge is that the most direct beneficiaries — Nvidia, the major hyperscalers themselves, TSMC — are already priced with significant AI assumptions embedded. The second-order plays are less obvious and carry different risk profiles.

Data center REITs and independent data center operators that can absorb hyperscaler colocation or wholesale demand are one category. The hyperscalers do not own all the infrastructure they use. Leased capacity from independent operators, particularly in markets where land and power costs favor third-party development, remains a meaningful part of the buildout. Power generation and grid infrastructure companies with contracted positions in high-demand markets represent another category, particularly as hyperscaler demand begins to drive active utility partnerships rather than passive grid connections. Industrial firms with specialized competencies in liquid cooling, modular power systems, and large-scale electrical infrastructure are a third layer — less visible in AI narratives but directly exposed to the capital being deployed.

None of these are simple or liquid positions. The most accessible entry points remain the hyperscalers themselves, where the capex guidance is unusually explicit and the revenue trajectory is, at least for now, validating the investment thesis. The harder work is identifying which second-order positions are available before the broader market catches up to the scale of what is being built — and before the infrastructure spending shows up fully in the revenue line of every company in the supply chain.

What to Watch Next

- Microsoft Q3 FY2026 earnings, expected April 29: Azure guidance of 37–38% growth was provided for the quarter. Any commentary on capacity constraints, or a revision to the capex outlook, will be the most current read on whether infrastructure demand is tracking ahead of or behind the $120 billion-plus spend plan.

- Amazon and Google Q1 2026 earnings: Both companies will report in late April. The backlog figures ($244 billion for Amazon, $240 billion for Google) are the key variables to watch. Growth in contracted backlog would confirm that the 2026 capex is being underwritten by real customer commitments, not speculative capacity.

- Power purchase agreement disclosures: Hyperscalers are increasingly announcing long-term energy deals alongside data center expansions. Each PPA announcement signals a new facility entering the pipeline. The geography of those deals also reveals which electricity markets are becoming AI infrastructure hubs.

- Nvidia’s next earnings and supply guidance: Because Nvidia captures approximately 90% of AI accelerator spend, its forward order visibility is the closest proxy for how much of the hyperscaler capex is converting into actual hardware orders. Any commentary on lead times or allocation constraints will reflect the true pace of the buildout.

- Independent data center operator earnings: Companies like Equinix and Digital Realty that lease capacity to hyperscalers should begin showing demand acceleration in their forward booking and pricing commentary as the 2026 commitments flow through procurement. A sustained pricing uptick in wholesale and hyperscale colocation would confirm the supply-demand dynamic implied by the capex figures.

- Whether consensus capex estimates are revised upward again: Goldman Sachs Research noted that consensus underestimated hyperscaler capex in both 2024 and 2025. If Q1 2026 earnings commentary suggests the current $660–690 billion aggregate estimate is again too conservative, it would extend the pattern that has defined the AI infrastructure cycle from the start.