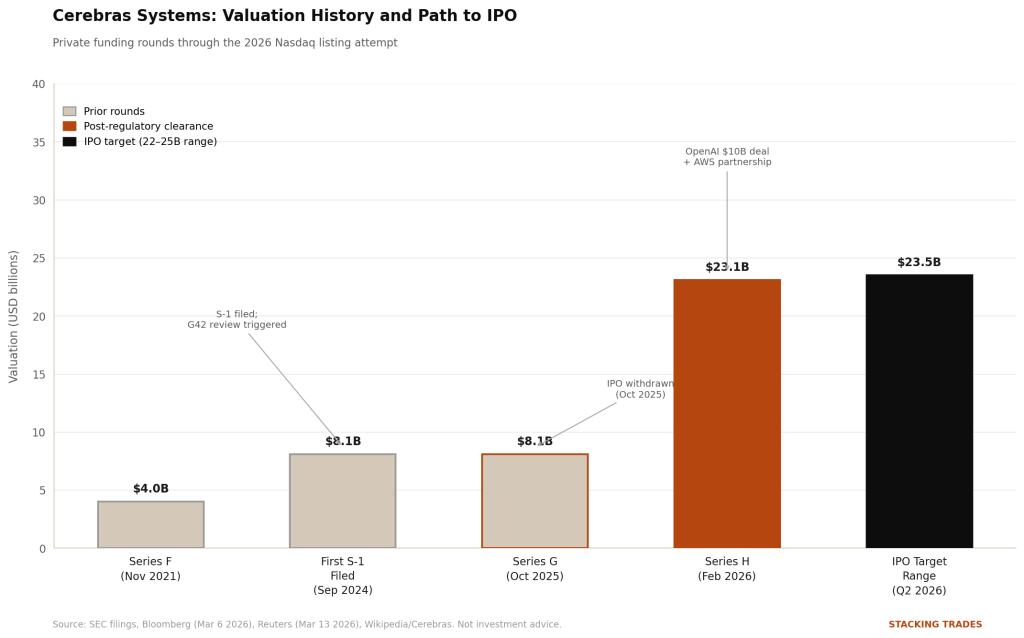

The last time Cerebras Systems tried to go public, it withdrew its registration statement in October 2025 — days after closing a funding round — citing an unresolved national security review of a minority investment from Abu Dhabi-based technology firm G42. The optics were not ideal. The company’s first prospectus had revealed that a single foreign customer represented roughly 87% of its revenue through the first half of 2024, and federal regulators wanted to understand what that relationship meant for sensitive American compute infrastructure.

That chapter is closed. G42 has since been removed from Cerebras’s primary shareholder structure to satisfy U.S. regulators, and the company has spent the months since building a customer base that looks nothing like the one in that first S-1. In January 2026, Cerebras signed a $10 billion compute deal with OpenAI, pledging 750 megawatts of computing capacity through 2028. In March, it announced a partnership with Amazon Web Services to deploy its CS-3 systems inside AWS data centers, available through Amazon Bedrock. The company is now valued at $23.1 billion after a February Series H round and is targeting a roughly $2 billion raise on the Nasdaq, with Morgan Stanley as lead underwriter, according to Bloomberg.

The public S-1 has not yet been filed as of this writing. But the architecture of the deal — the timing, the customer lineup, the deliberate sequencing of announcements — reads like a company that understands exactly what a prospectus needs to say.

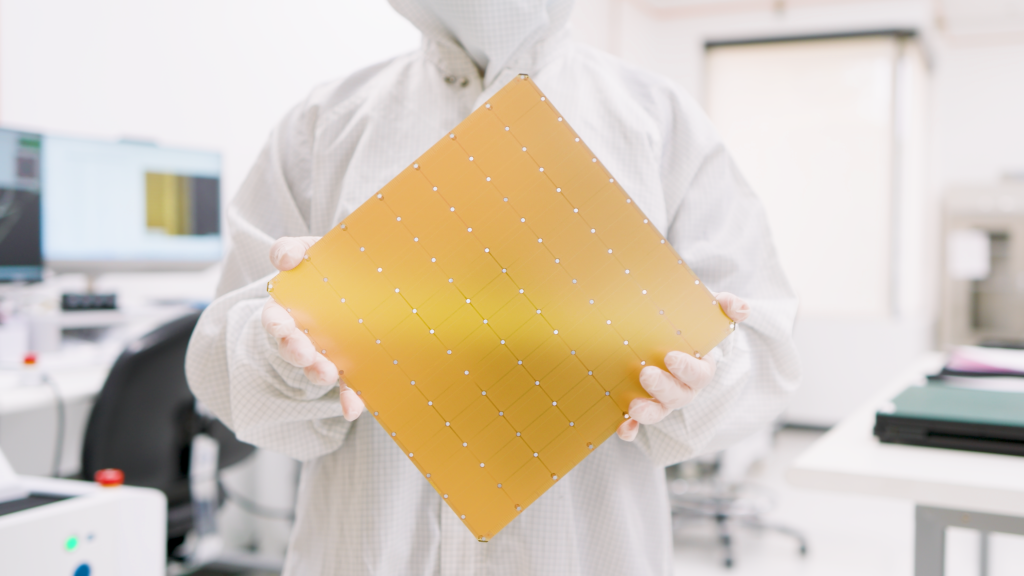

The Chip That Doesn’t Fit the Nvidia Model

To understand why Cerebras matters to investors, you need to understand why it is structurally different from every other AI chip company trying to go public right now. Nvidia’s dominant GPU architecture works by linking hundreds or thousands of discrete chips, each physically small and paired with its own high-bandwidth memory, over fast interconnects. The bottleneck in that approach is data movement: getting information from one chip to another, and from memory to processor, fast enough to keep pace with the model’s demands.

Cerebras built the WSE-3 from the other direction. The chip is a single processor built from nearly an entire 300mm silicon wafer, with roughly 56 times the physical area of Nvidia’s H100. It contains 4 trillion transistors, 900,000 AI-optimized cores, and 44 gigabytes of on-chip SRAM with 27 petabytes per second of internal memory bandwidth. Because model weights can sit in on-chip memory rather than in external memory, the memory-to-processor bottleneck largely disappears. The machine runs faster as a result, particularly in inference, where an AI system generates responses to live queries rather than training on new data.
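As a rough check on the scale described above, the area comparison can be worked out directly. This is a back-of-envelope sketch: the die areas used here (46,225 mm² for the WSE-3 and 814 mm² for the H100) are assumptions taken from publicly quoted spec sheets, not figures stated in this article, and the helper names are illustrative.

```python
import math

# Assumed die areas in square millimetres, from publicly quoted specs
# (not stated in the article itself).
WSE3_AREA_MM2 = 46_225.0
H100_AREA_MM2 = 814.0
WAFER_DIAMETER_MM = 300.0  # wafer size named in the article


def area_ratio(big_mm2: float, small_mm2: float) -> float:
    """How many times larger one die is than another, by area."""
    return big_mm2 / small_mm2


def wafer_fraction(die_mm2: float, wafer_diameter_mm: float) -> float:
    """Share of a circular wafer's area that the die covers."""
    wafer_area = math.pi * (wafer_diameter_mm / 2) ** 2
    return die_mm2 / wafer_area


ratio = area_ratio(WSE3_AREA_MM2, H100_AREA_MM2)
fraction = wafer_fraction(WSE3_AREA_MM2, WAFER_DIAMETER_MM)
print(f"WSE-3 vs H100 area: about {ratio:.1f}x")
print(f"Share of a 300mm wafer covered by the die: about {fraction:.0%}")
```

Under these assumed areas the ratio lands in the high 50s, in line with the "roughly 56 times" figure, and the square die covers about two-thirds of the round wafer it is cut from.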

The practical result: Cerebras delivered Llama 4 Maverick inference at more than 2,500 tokens per second per user on its CS-3 system, compared to roughly half that on Nvidia’s flagship DGX B200 Blackwell running the same 400-billion-parameter model. For applications like agentic coding tools, where a developer waits on multi-step AI reasoning in real time, that gap roughly halves the time spent generating each response.
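To make the throughput gap concrete, here is a minimal sketch of what it could mean for an agentic workflow. The token rates come from the paragraph above; the workload (10 sequential steps of 2,000 output tokens each) is a hypothetical chosen only for illustration, and the calculation ignores prompt processing, network latency, and tool-call time.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream output_tokens at a steady per-user decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Rates from the article: ~2,500 tokens/s on CS-3, "roughly half" on DGX B200.
CEREBRAS_TPS = 2500.0
NVIDIA_TPS = CEREBRAS_TPS / 2

# Hypothetical agentic task: sequential steps, each waiting on the previous one.
STEPS = 10
TOKENS_PER_STEP = 2000

cerebras_wait = STEPS * generation_seconds(TOKENS_PER_STEP, CEREBRAS_TPS)
nvidia_wait = STEPS * generation_seconds(TOKENS_PER_STEP, NVIDIA_TPS)

print(f"CS-3:     {cerebras_wait:.1f} s of generation time")
print(f"DGX B200: {nvidia_wait:.1f} s of generation time")
```

Because the steps run one after another, the per-step difference accumulates: in this hypothetical the slower system spends twice as long generating, and the absolute gap grows with every additional step.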

“Every customer large or small is on AWS, from individual developers to the largest banks in the world. The deal will make it easy as a click to get on Cerebras.”

— Andrew Feldman, CEO, Cerebras Systems, Reuters, March 13, 2026

The Amazon Deal Changes the Distribution Equation

For most chip startups, hardware reach is the hardest problem. You can build the fastest processor in the world and still lose if your customers can’t access it through the infrastructure they already use. The AWS partnership, announced March 13, addresses that directly. Under the arrangement, Cerebras CS-3 systems sit inside AWS data centers and operate alongside Amazon’s own Trainium3 chips in a so-called disaggregated inference architecture: Trainium handles the prefill stage of a query, in which the prompt is processed, and Cerebras handles the decode stage, in which the response is generated token by token. AWS says the result is five times the high-speed token capacity in the same hardware footprint. The service, running on Amazon Bedrock, is expected to launch in the second half of 2026.
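The prefill/decode split can be illustrated with a toy sketch. Nothing here reflects the actual AWS or Cerebras implementation: the class names, the `KVCache` stand-in, and the "model" (which just sums token IDs) are all hypothetical, chosen only to show how one stage hands state to the other.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class KVCache:
    """Stand-in for the attention state built up from the tokens seen so far."""
    tokens: List[int]


class PrefillWorker:
    """Plays the role the article assigns to Trainium: process the prompt once."""

    def run(self, prompt_tokens: List[int]) -> KVCache:
        # Real prefill handles all prompt tokens in one parallel, compute-heavy pass.
        return KVCache(tokens=list(prompt_tokens))


class DecodeWorker:
    """Plays the role the article assigns to Cerebras: emit tokens one at a time."""

    def run(self, cache: KVCache, max_new_tokens: int) -> List[int]:
        generated: List[int] = []
        for _ in range(max_new_tokens):
            # Toy "model": next token is the sum of the context modulo 100.
            next_token = sum(cache.tokens) % 100
            cache.tokens.append(next_token)  # each step extends the cache
            generated.append(next_token)
        return generated


def generate(prompt_tokens: List[int], max_new_tokens: int) -> List[int]:
    cache = PrefillWorker().run(prompt_tokens)        # stage 1: prefill
    return DecodeWorker().run(cache, max_new_tokens)  # stage 2: decode


print(generate([1, 2, 3], 3))  # prints [6, 12, 24]
```

The design point is that the two stages stress hardware differently: prefill is a single large parallel pass, while decode is a long sequence of small dependent steps, so each can in principle be routed to the hardware best suited to it.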

The significance for Cerebras is distribution at a scale no startup can build independently. AWS serves customers ranging from individual developers to global financial institutions. David Brown, Vice President of Compute and ML Services at AWS, said publicly that the Trainium-Cerebras solution will deliver “inference that’s an order of magnitude faster and higher performance than what’s available today.” That is not a press release formality. It is a co-endorsement from the world’s largest cloud provider, delivered weeks before an IPO roadshow.

Cerebras has also signed IBM and the U.S. Department of Energy as customers, alongside OpenAI, Cognition, and Mistral. The customer concentration risk that overshadowed the first S-1 has been structurally dismantled. The question is whether the new customer roster can support the valuation.

The Valuation Math Is Tight

At the $23 billion figure established in the February Series H, Cerebras would debut as one of the ten largest semiconductor IPOs in history, priced at a steep multiple of its current revenue. Estimated 2025 revenue exceeded $1 billion according to multiple analyst reports, which implies a valuation of up to roughly 23 times sales. The company’s cost structure is not cheap to operate either: it includes proprietary water-cooled hardware, TSMC wafer manufacturing, and a software stack that requires developers to leave Nvidia’s CUDA ecosystem.
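The sensitivity of that multiple to the revenue figure can be sketched in a few lines. The $23 billion valuation and the "exceeded $1 billion" revenue floor come from the text; the higher revenue scenarios are hypothetical placeholders, since audited figures will only appear in the S-1.

```python
def price_to_sales(valuation_usd: float, revenue_usd: float) -> float:
    """Valuation divided by annual revenue."""
    if revenue_usd <= 0:
        raise ValueError("revenue_usd must be positive")
    return valuation_usd / revenue_usd


VALUATION_USD = 23e9  # Series H figure cited in the article

# $1.0B is the floor implied by "exceeded $1 billion"; the rest are hypothetical.
REVENUE_SCENARIOS_USD = [1.0e9, 1.5e9, 2.0e9]

multiples = {
    revenue: price_to_sales(VALUATION_USD, revenue)
    for revenue in REVENUE_SCENARIOS_USD
}

for revenue, multiple in multiples.items():
    print(f"revenue ${revenue / 1e9:.1f}B -> {multiple:.1f}x sales")
```

The 23x figure is therefore a ceiling rather than a point estimate: every additional dollar of audited 2025 revenue above the $1 billion floor pulls the multiple down.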

The CUDA problem is worth understanding. Nvidia’s developer ecosystem is the deepest competitive moat in the chip industry. Tens of thousands of enterprise AI teams write code specifically for CUDA; switching to Cerebras’s software stack requires retraining and re-tooling. The company’s inference API — which lets developers access wafer-scale performance through a standard cloud interface without buying hardware — is designed to lower that barrier. But it does not eliminate it. For institutional investors pricing the IPO, the question is how many enterprise customers will opt for Cerebras performance at a premium over Nvidia compatibility at a discount.

The Amazon integration changes that calculus somewhat. If developers can access Cerebras hardware through a standard Bedrock API call — the same interface they already use for other AWS AI services — the switching cost drops considerably. That may be the single most important structural fact about the March 13 announcement, and it is likely to feature prominently in the S-1.

What the Second Attempt Gets Right

Cerebras has learned from the timing mistake of the first filing. The original S-1 landed in September 2024 into a national security review it could not resolve quickly. The company tried to wait it out, raised capital to extend its runway, and ultimately withdrew. This time, the regulatory pathway was cleared before the filing, the key customer relationships were announced in sequence — OpenAI in January, Amazon in March — and the underwriter was selected before the formal S-1 submission.

The IPO window for Q2 2026 is not guaranteed to stay open. Market volatility and macro uncertainty can compress or shut the calendar quickly, as the broader IPO market has demonstrated multiple times in the last 18 months. The company’s Nasdaq ticker reservation — CBRS — has been held since the first filing. Whether it gets used in April or slides to June will depend on when the public S-1 drops and how investor appetite looks after the bank earnings season that begins the week of April 13.

What is clear is that Cerebras is no longer asking the market to fund a technical bet on an unproven architecture. It is asking the market to value a company that OpenAI, Amazon, IBM, and the U.S. Department of Energy have already paid to use.

WHAT TO WATCH NEXT

- The public S-1 filing on SEC EDGAR — expected in late April or early May before any roadshow; it will contain the first audited revenue figures, cost structure, and TSMC manufacturing dependency disclosure.

- AWS Bedrock launch date for the Trainium-Cerebras disaggregated service — the second-half 2026 window is wide; an earlier-than-expected rollout would strengthen the IPO narrative heading into pricing.

- Nvidia’s response — Reuters reported Nvidia is expected to combine its own GPU chips with Groq (acquired for $17 billion in December 2025) in a similar disaggregated inference architecture. That announcement, if it arrives before Cerebras prices, directly affects how investors frame the competitive risk.

- OpenAI contract execution milestones — the $10 billion agreement runs through 2028, but delivery is staged; any disclosure of compute capacity actually deployed versus committed will be the most meaningful revenue signal in the S-1.

- TSMC wafer allocation — Cerebras uses nearly an entire 300mm wafer per chip and competes directly with Apple and Nvidia for TSMC manufacturing capacity; any tightening in that supply chain is a direct production risk.